Here's a link that shows the WWVB code format: https://www.nist.gov/pml/div688/grp40/wwvbtimecode.cfm

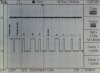

My understanding is that at point A, the signal intensity drops precisely on the second. However, there is a correction (UT1) factor included in the code.

Assume a user has a receiver that is synchronized to the WWVB signal and detects the instant of the high-to-low transition. Why is the error byte in the hundreds of milliseconds? My guess is that the correction is the part of the accumulated "leap second" that has not yet been taken into account. Is that correct?
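
To make the question concrete, here is a minimal sketch of how I read the correction field. It assumes the time code carries a sign plus four magnitude bits weighted 0.8, 0.4, 0.2, and 0.1 s, which is my reading of the chart and may be wrong. The function name `ut1_correction` and its arguments are hypothetical, made up for illustration.

```python
# Assumed weights of the four UT1 magnitude bits, in tenths of a second,
# most significant bit first (0.8 s, 0.4 s, 0.2 s, 0.1 s).
UT1_WEIGHTS_TENTHS = (8, 4, 2, 1)


def ut1_correction(positive, magnitude_bits):
    """Return the signed UT1 correction in seconds.

    positive       -- True if the sign bits indicate a positive correction
    magnitude_bits -- four 0/1 values, most significant first
    """
    if len(magnitude_bits) != len(UT1_WEIGHTS_TENTHS):
        raise ValueError("expected exactly four magnitude bits")
    # Sum in integer tenths to avoid floating-point accumulation error.
    tenths = sum(w for w, bit in zip(UT1_WEIGHTS_TENTHS, magnitude_bits) if bit)
    return (tenths if positive else -tenths) / 10


# Example: negative sign, bits 0 0 1 1 -> -(0.2 + 0.1) s
print(ut1_correction(False, [0, 0, 1, 1]))
```

If that reading is right, the field can only express values in steps of 0.1 s, which is why I see numbers in the hundreds of milliseconds.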

John
